Edge computing and cloud computing are two fundamental paradigms shaping modern embedded systems and connected products. While cloud computing centralizes processing in remote data centers, edge computing pushes computation closer to the data source, often directly onto devices or local gateways.

As devices generate increasing volumes of real-time data, understanding the trade-offs between edge and cloud is critical for building scalable, responsive, and cost-efficient systems.

Technical Explanation

What is Cloud Computing?

Cloud computing relies on centralized infrastructure: large-scale data centers operated by providers such as AWS, Microsoft Azure, or Google Cloud. Devices send data over the internet to the cloud, where it is processed, stored, and analyzed.

Key characteristics:

- Centralized processing

- Virtually unlimited scalability

- High computational power

- Strong support for analytics, AI, and storage

Typical workflow:

- Device collects data (sensor, camera, etc.)

- Data is transmitted via network

- Cloud processes and stores data

- Results are sent back to the device or dashboard
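The cloud-centric workflow above can be sketched as a device loop that forwards every raw sample and leaves all processing to the remote side. This is a minimal sketch: the `transport` callable is a hypothetical stand-in for an MQTT or HTTPS client, and the `device_id` value and JSON message fields are illustrative assumptions, not a defined protocol.

```python
import json
import time
from typing import Callable, Iterable


def cloud_forwarding_loop(
    readings: Iterable[float],
    transport: Callable[[bytes], None],  # hypothetical stand-in for an MQTT/HTTPS client
    device_id: str = "sensor-01",        # illustrative identifier
) -> int:
    """Forward every raw reading unchanged; return how many messages were sent."""
    sent = 0
    for value in readings:
        # Steps 1-2: collect a sample and transmit it as-is, one message per sample.
        message = {"device": device_id, "ts": time.time(), "value": value}
        transport(json.dumps(message).encode("utf-8"))
        sent += 1
    # Steps 3-4 (processing, storage, returning results) happen in the cloud.
    return sent


outbox: list = []
print(cloud_forwarding_loop([21.5, 21.6, 21.4], outbox.append))  # prints 3
```

Every sample costs one network message, so traffic and round-trip delays grow directly with the sampling rate.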

What is Edge Computing?

Edge computing moves computation closer to where data is generated: onto embedded devices, gateways, or local servers. Instead of sending all data to the cloud, the device or gateway processes it locally.

Key characteristics:

- Decentralized architecture

- Low latency

- Reduced bandwidth usage

- Improved reliability in offline scenarios

Typical workflow:

- The device collects data

- Local processing occurs (e.g., filtering, inference)

- Only relevant data is sent to the cloud (optional)
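The edge workflow can be sketched the same way, except that filtering and a decision happen on the device before anything is transmitted. In this minimal sketch a moving average plays the role of local filtering and a fixed threshold stands in for inference; the window size, the `threshold` value, and the event format are all hypothetical.

```python
from collections import deque
from typing import Callable, Iterable, List


def edge_loop(
    readings: Iterable[float],
    transport: Callable[[dict], None],  # hypothetical uplink, used only for relevant events
    window: int = 4,                    # moving-average length (illustrative)
    threshold: float = 30.0,            # hypothetical alert level
) -> List[float]:
    """Smooth readings locally and forward only those that cross a threshold."""
    recent: deque = deque(maxlen=window)
    smoothed: List[float] = []
    for value in readings:
        recent.append(value)
        avg = sum(recent) / len(recent)  # local filtering
        smoothed.append(avg)
        if avg > threshold:              # stand-in for local inference
            transport({"event": "over_threshold", "value": round(avg, 2)})
    return smoothed


events: list = []
edge_loop([20, 20, 20, 20, 60, 60, 60, 60], events.append)
print(len(events))  # prints 3
```

Here eight samples produce three uplink messages instead of eight; replacing the threshold with a real model keeps the same structure.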

Core Architectural Differences

| Aspect | Cloud Computing | Edge Computing |
|---|---|---|
| Processing Location | Remote data centers | Near data source (device/gateway) |
| Latency | Higher (network-dependent) | Very low |
| Bandwidth Usage | High | Reduced |
| Scalability | Virtually unlimited | Limited by local hardware |
| Reliability | Depends on connectivity | Can operate offline |
| Security Scope | Centralized | Distributed (more complex) |

Key Technical Challenges

Cloud Computing Challenges:

- Network latency for real-time systems

- Bandwidth costs for high-frequency data streams

- Dependency on connectivity

- Data privacy concerns (especially in regulated industries)
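To put the bandwidth point in numbers, the back-of-the-envelope calculation below compares streaming raw samples with sending edge-computed summaries. The sensor parameters (1 kHz, three axes, 16-bit samples, one float32 RMS value per axis per second) are hypothetical, and protocol and TLS overhead are ignored, so real traffic would be somewhat higher.

```python
SECONDS_PER_DAY = 86_400


def daily_bytes(rate_hz: float, channels: int, bytes_per_value: int) -> int:
    """Payload bytes per day for one device, ignoring protocol/TLS overhead."""
    return int(rate_hz * channels * bytes_per_value * SECONDS_PER_DAY)


# Hypothetical vibration sensor streamed raw: 1 kHz, 3 axes, 16-bit samples.
raw = daily_bytes(1_000, 3, 2)
# Same sensor, but the edge sends one float32 RMS value per axis each second.
summary = daily_bytes(1, 3, 4)
print(f"raw: {raw / 1e6:.1f} MB/day, summary: {summary / 1e6:.2f} MB/day")
# prints "raw: 518.4 MB/day, summary: 1.04 MB/day"
```

Per device that is a 500x reduction, and the absolute difference scales linearly with fleet size.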

Edge Computing Challenges:

- Limited compute and storage resources

- Device management complexity

- Security across distributed nodes

- Firmware update orchestration (often requiring robust firmware development practices)
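One common way to tame update orchestration across a fleet is a staged (canary) rollout: a small, stable subset of devices receives a release first, and the percentage widens only if those devices stay healthy. The sketch below assigns each device a deterministic bucket by hashing its ID; the function names, the use of SHA-256, and the stage percentages are illustrative assumptions, not the interface of any particular OTA service.

```python
import hashlib


def rollout_bucket(device_id: str) -> int:
    """Map a device ID to a stable bucket in [0, 100).

    SHA-256 is used instead of Python's built-in hash(), which is
    randomized per process and would reshuffle cohorts on every restart.
    """
    digest = hashlib.sha256(device_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def should_update(device_id: str, rollout_percent: int) -> bool:
    """True if this device falls inside the current rollout stage."""
    return rollout_bucket(device_id) < rollout_percent


fleet = [f"dev-{i}" for i in range(1000)]  # hypothetical device IDs
for stage in (1, 10, 50, 100):             # widen only after the previous stage looks healthy
    targets = [d for d in fleet if should_update(d, stage)]
    print(f"{stage:>3}% stage -> {len(targets)} devices")
```

Because buckets are fixed, every device updated in an early stage is also included in all later stages, so no device is flipped back and forth between releases.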

Applications & Industry Relevance

IoT and Smart Devices

In IoT systems, edge computing is often essential for real-time responsiveness. For example:

- Smart sensors filter noise locally before sending data

- Industrial gateways perform anomaly detection on-site

Cloud computing complements this by:

- Aggregating data across devices

- Running large-scale analytics or machine learning pipelines
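On-site anomaly detection at a gateway can be as simple as a rolling z-score: compare each new sample with the mean and standard deviation of the recent window, and forward only the outliers. This is one simple technique chosen for illustration, not the only option; the `window` and `z_limit` defaults are hypothetical and would need tuning per signal.

```python
import math
from collections import deque
from typing import Iterable, List


def detect_anomalies(samples: Iterable[float], window: int = 20,
                     z_limit: float = 3.0) -> List[int]:
    """Return indices of samples more than `z_limit` standard deviations away
    from the mean of the preceding `window` samples (rolling z-score)."""
    history: deque = deque(maxlen=window)
    anomalies: List[int] = []
    for i, x in enumerate(samples):
        if len(history) == window:           # wait until the baseline window is full
            mean = sum(history) / window
            std = math.sqrt(sum((h - mean) ** 2 for h in history) / window)
            if std > 0 and abs(x - mean) / std > z_limit:
                anomalies.append(i)          # only these indices would go upstream
        history.append(x)
    return anomalies


signal = [10.0 + 0.1 * (i % 2) for i in range(40)]  # illustrative steady signal
signal[30] = 50.0                                   # injected spike
print(detect_anomalies(signal))  # prints [30]
```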

Industrial Automation

Manufacturing systems demand low latency and high reliability. Edge computing enables:

- Real-time control loops

- Predictive maintenance at the machine level

Cloud systems are used for:

- Fleet-level monitoring

- Historical data analysis
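A real-time control loop illustrates why this work stays on the machine: a loop that runs every few milliseconds has no room for an internet round trip, which typically takes tens of milliseconds or more. Below is a minimal discrete PI (proportional-integral) controller step of the kind that would run locally on each cycle; the gains, loop period, and actuator limits are hypothetical, and the anti-windup approach (conditional integration) is one common choice among several.

```python
from dataclasses import dataclass


@dataclass
class PIController:
    """Discrete PI controller with output clamping and conditional integration."""
    kp: float
    ki: float
    dt: float               # loop period in seconds
    out_min: float = 0.0    # actuator limits (hypothetical, e.g. % valve opening)
    out_max: float = 100.0
    integral: float = 0.0   # accumulated error * time

    def step(self, setpoint: float, measurement: float) -> float:
        error = setpoint - measurement
        candidate = self.integral + error * self.dt
        output = self.kp * error + self.ki * candidate
        # Anti-windup: only commit the integral while the output is unsaturated.
        if self.out_min <= output <= self.out_max:
            self.integral = candidate
        return max(self.out_min, min(self.out_max, output))


pi = PIController(kp=2.0, ki=0.5, dt=0.01)  # hypothetical gains for a 10 ms loop
print(pi.step(setpoint=50.0, measurement=40.0))
```

The cloud side of this example would only receive periodic summaries of setpoints, errors, and actuator usage for fleet monitoring and historical analysis.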

Automotive Systems

Modern vehicles generate massive volumes of sensor data (cameras, LiDAR, radar). Edge computing is critical for:

- Autonomous driving decisions

- Safety-critical functions

Cloud computing supports:

- OTA updates

- Fleet analytics

- Model training for ADAS (advanced driver-assistance systems)

Medical Devices

In healthcare, latency and privacy are crucial:

- Edge processing enables immediate response (e.g., patient monitoring devices)

- Cloud systems provide long-term storage and analytics

Consumer Electronics

Devices like smart home systems use a hybrid approach:

- Edge: voice recognition, local automation

- Cloud: updates, personalization, AI model improvements

Edge Computing vs Cloud Computing: When to Use Each

Use Edge Computing When:

- You need real-time decision-making (e.g., robotics, autonomous systems)

- Network connectivity is unreliable or intermittent

- Data privacy regulations require local processing

- Bandwidth costs must be minimized

Use Cloud Computing When:

- You require large-scale data processing or storage

- Centralized management and analytics are needed

- AI/ML model training is required

- System scalability is a priority
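The two checklists above can be encoded as a rough first-pass filter when scoping a new product. This is a sketch under stated assumptions: the field names are hypothetical, any edge criterion plus any cloud criterion is read as "hybrid", and the fallback to "cloud" when no edge constraint applies is an assumption; a real decision would weigh latency budgets and costs quantitatively.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    """Hypothetical yes/no answers to the checklists above."""
    real_time: bool = False               # decisions within a tight latency budget
    unreliable_network: bool = False      # intermittent or unreliable connectivity
    data_must_stay_local: bool = False    # privacy or regulatory constraint
    bandwidth_constrained: bool = False
    large_scale_analytics: bool = False   # large-scale processing or storage
    centralized_management: bool = False
    model_training: bool = False
    elastic_scaling: bool = False


def recommend(req: Requirements) -> str:
    """Return 'edge', 'cloud', or 'hybrid' from the checklist answers."""
    edge = any([req.real_time, req.unreliable_network,
                req.data_must_stay_local, req.bandwidth_constrained])
    cloud = any([req.large_scale_analytics, req.centralized_management,
                 req.model_training, req.elastic_scaling])
    if edge and cloud:
        return "hybrid"
    if edge:
        return "edge"
    return "cloud"  # assumption: default when no edge-specific constraint applies


print(recommend(Requirements(real_time=True, model_training=True)))  # prints hybrid
```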

Hybrid Architecture: Best of Both Worlds

In practice, most modern systems use a hybrid approach:

- Edge handles real-time processing and filtering

- Cloud manages aggregation, analytics, and orchestration

Example architecture:

- Embedded device → local inference (edge)

- Gateway → aggregation

- Cloud → long-term analytics + dashboards

This approach balances performance, cost, and scalability.
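The three-tier example architecture can be sketched as plain functions, one per tier. In this minimal sketch the device "model" is a hypothetical threshold classifier, the gateway aggregates labels into counts, and a Python list stands in for the cloud's time-series store; all names and thresholds are illustrative.

```python
from collections import Counter
from typing import Dict, List

cloud_store: List[Dict[str, int]] = []  # cloud tier: stand-in for a time-series database


def device_infer(sample: float) -> str:
    """Edge tier: stand-in for a local model (hypothetical thresholds)."""
    if sample > 80.0:
        return "fault"
    if sample > 60.0:
        return "warning"
    return "normal"


def gateway_aggregate(labels: List[str]) -> Dict[str, int]:
    """Gateway tier: collapse per-sample labels into one count summary."""
    return dict(Counter(labels))


def run_pipeline(devices: Dict[str, List[float]]) -> Dict[str, int]:
    """One reporting period: infer on each device, aggregate, upload the summary."""
    labels = [device_infer(x) for samples in devices.values() for x in samples]
    summary = gateway_aggregate(labels)
    cloud_store.append(summary)  # only the summary crosses the WAN link
    return summary


print(run_pipeline({"pump-1": [10.0, 65.0, 90.0], "pump-2": [20.0, 30.0]}))
# prints {'normal': 3, 'warning': 1, 'fault': 1}
```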

Best Practices for Implementation

Designing an Edge + Cloud System

- Define latency requirements early

- Partition workloads between edge and cloud

- Optimize data pipelines (filter, compress, batch)

- Ensure secure communication (TLS, device authentication)

- Plan for remote updates (OTA infrastructure)
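The "batch" and "compress" parts of pipeline optimization can be shown with the Python standard library alone: many readings go out as one message instead of one message each, and repetitive telemetry compresses well. JSON and `zlib` are chosen here only for illustration (a production system might prefer a binary encoding such as CBOR or Protocol Buffers), and the sample data is made up.

```python
import json
import zlib
from typing import Dict, List


def build_batch(readings: List[Dict], level: int = 6) -> bytes:
    """Edge side: serialize many readings as one compact JSON array, then compress."""
    payload = json.dumps(readings, separators=(",", ":")).encode("utf-8")
    return zlib.compress(payload, level)


def read_batch(blob: bytes) -> List[Dict]:
    """Cloud side: decompress and parse a batch."""
    return json.loads(zlib.decompress(blob).decode("utf-8"))


readings = [{"t": i, "temp": 21.5} for i in range(100)]  # illustrative data
blob = build_batch(readings)
print(len(json.dumps(readings).encode("utf-8")), "bytes raw ->", len(blob), "bytes sent")
```

Batching also amortizes per-message costs such as protocol headers and connection setup, which matter as much as payload size for small, frequent messages.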

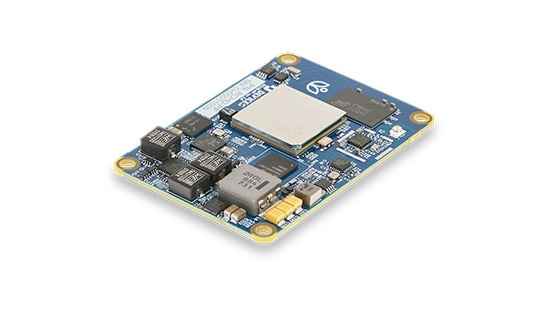

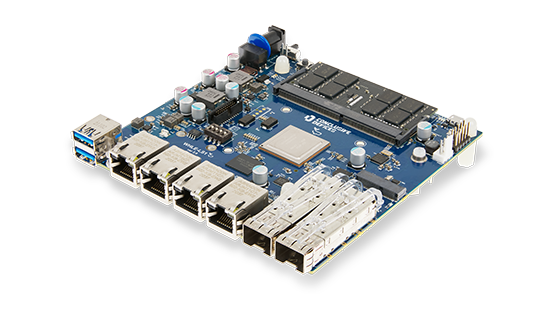

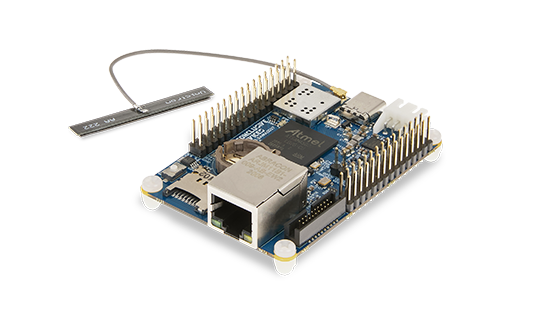

Hardware Considerations

Edge computing requires careful hardware design:

- CPU/GPU/AI accelerator selection

- Power constraints (especially in battery-powered devices)

- Thermal management

For more on this, see PCB design and embedded hardware optimization strategies.
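Power constraints are usually estimated with a duty-cycle model: the device spends a fraction of its time active (sensing, inference, radio) and the rest asleep. The sketch below uses a simple two-state model that ignores self-discharge, temperature effects, and regulator losses; the 2000 mAh battery, 50 mA active current, and 10 µA sleep current are hypothetical values.

```python
def battery_life_hours(capacity_mah: float, active_ma: float, sleep_ma: float,
                       duty_cycle: float) -> float:
    """Estimate battery life from a two-state duty-cycle model.

    duty_cycle is the fraction of time spent active (0.0 to 1.0).
    Ignores self-discharge, temperature, and converter losses.
    """
    avg_ma = duty_cycle * active_ma + (1.0 - duty_cycle) * sleep_ma
    return capacity_mah / avg_ma


for duty in (0.01, 0.10):
    days = battery_life_hours(2000, 50, 0.01, duty) / 24
    print(f"{duty:.0%} active -> {days:.0f} days")
# prints "1% active -> 163 days" then "10% active -> 17 days"
```

Because the active current dominates the average, shortening local inference time (or using a more efficient accelerator) has a much larger effect on battery life than shaving sleep current.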

Software & Firmware Strategy

- Modular firmware architecture

- Robust update mechanisms (OTA)

- Edge AI frameworks (e.g., TensorFlow Lite, ONNX Runtime)

- Integration with cloud APIs

This is where strong expertise in firmware development becomes critical for maintaining reliability at scale.
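A robust OTA mechanism commonly relies on an A/B (dual-slot) layout: the new image is written to the inactive slot, the bootloader tries it a limited number of times, and the device falls back to the known-good slot unless the new firmware confirms itself. The state machine below models that logic in Python purely for illustration; the `BootState` fields and function names are hypothetical, and in real firmware this metadata lives in flash and is handled by the bootloader.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class BootState:
    """Hypothetical boot metadata persisted in flash for an A/B layout."""
    active: str = "A"               # slot last confirmed as good
    pending: Optional[str] = None   # slot holding a new, not-yet-confirmed image
    attempts_left: int = 0


def install_update(state: BootState, max_attempts: int = 3) -> None:
    """Mark the inactive slot (already written with the new image) as pending."""
    state.pending = "B" if state.active == "A" else "A"
    state.attempts_left = max_attempts


def select_boot_slot(state: BootState) -> str:
    """Bootloader decision, run on every boot."""
    if state.pending is not None:
        if state.attempts_left > 0:
            state.attempts_left -= 1
            return state.pending      # try the new image
        state.pending = None          # attempts exhausted: roll back
    return state.active


def confirm_boot(state: BootState) -> None:
    """Called by the new firmware once its self-test passes."""
    if state.pending is not None:
        state.active = state.pending
        state.pending = None


s = BootState()
install_update(s)
print(select_boot_slot(s))  # prints B (trying the new image)
confirm_boot(s)             # self-test passed: B becomes the known-good slot
```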

Common Mistakes

- Overloading the edge: Trying to run heavy workloads on constrained devices

- Ignoring network variability: Assuming stable connectivity

- Poor data filtering: Sending unnecessary raw data to the cloud

- Weak security model: Not accounting for distributed attack surfaces

- Lack of update strategy: Failing to support OTA updates
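A common remedy for "ignoring network variability" is store-and-forward with exponential backoff: messages are queued locally, sent in order when the link works, and retries are spaced out with random jitter so a fleet does not reconnect in lockstep. This is a minimal sketch; the `send` callable (returning `True` on success), the queue limit, the delay bounds, and the drop-oldest policy are assumptions, and the jitter variant shown is the "full jitter" approach.

```python
import random
from collections import deque
from typing import Callable, Deque


class StoreAndForward:
    """Buffer messages locally and flush them when the uplink is available."""

    def __init__(self, send: Callable[[bytes], bool], max_queue: int = 1000,
                 base_delay: float = 1.0, max_delay: float = 300.0) -> None:
        self.send = send  # hypothetical uplink: returns True on success
        self.queue: Deque[bytes] = deque(maxlen=max_queue)  # oldest dropped when full
        self.base_delay = base_delay
        self.max_delay = max_delay
        self.failures = 0

    def enqueue(self, msg: bytes) -> None:
        self.queue.append(msg)

    def flush(self) -> float:
        """Send queued messages in order. Return seconds to wait before the
        next attempt, or 0.0 if the queue was fully drained."""
        while self.queue:
            if not self.send(self.queue[0]):
                self.failures += 1
                delay = min(self.max_delay, self.base_delay * 2 ** (self.failures - 1))
                return random.uniform(0.0, delay)  # full jitter
            self.queue.popleft()  # remove only after a confirmed send
            self.failures = 0
        return 0.0
```

Removing a message only after a confirmed send means an outage never silently drops data, except when the bounded queue overflows, which is an explicit trade-off against limited device memory.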

Edge Computing vs Cloud Computing: FAQs

What is the main difference between edge and cloud computing?

The main difference lies in where data processing occurs: edge computing processes data locally near the source, while cloud computing processes data in centralized remote servers.

Is edge computing replacing cloud computing?

No. Edge computing complements cloud computing. Most systems use a hybrid model combining both approaches.

Which is better for IoT systems?

It depends on the use case:

- Edge is better for real-time processing

- Cloud is better for analytics and storage

Does edge computing improve security?

It can improve privacy by keeping data local, but it also widens the attack surface: every deployed device becomes a node that must be authenticated, hardened, and kept patched.

Can AI run on edge devices?

Yes. Edge AI is increasingly common, using optimized models and hardware accelerators for local inference.
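One of the most common model optimizations for edge inference is 8-bit quantization, which stores float32 weights and activations as int8 (a 4x size reduction) using a scale and a zero point. The sketch below shows the general affine (asymmetric) scheme in plain Python; the function names are hypothetical, and real frameworks add per-channel scales, calibration, and integer-only kernels on top of this idea.

```python
from typing import List, Tuple

QMIN, QMAX = -128, 127  # int8 range


def quant_params(xmin: float, xmax: float) -> Tuple[float, int]:
    """Scale and zero point mapping the float range [xmin, xmax] onto int8."""
    xmin, xmax = min(xmin, 0.0), max(xmax, 0.0)  # range must contain 0
    scale = (xmax - xmin) / (QMAX - QMIN)
    if scale == 0.0:
        scale = 1.0  # degenerate all-zero range; any scale works
    zero_point = int(round(QMIN - xmin / scale))
    return scale, zero_point


def quantize(xs: List[float], scale: float, zp: int) -> List[int]:
    """q = clamp(round(x / scale) + zp, QMIN, QMAX)"""
    return [max(QMIN, min(QMAX, int(round(x / scale)) + zp)) for x in xs]


def dequantize(qs: List[int], scale: float, zp: int) -> List[float]:
    """x is approximately scale * (q - zp)"""
    return [scale * (q - zp) for q in qs]


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]  # illustrative values
s, z = quant_params(min(weights), max(weights))
q = quantize(weights, s, z)
print(q, dequantize(q, s, z))
```

The round-trip error stays within about one quantization step (`scale`), and 0.0 maps back exactly, which matters for zero padding and ReLU outputs.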

Conclusion

Edge computing and cloud computing are not competing paradigms; they are complementary building blocks of modern embedded systems. The choice between them depends on latency requirements, data volume, connectivity, and system complexity.

For engineering teams in IoT, automotive, and industrial domains, the real challenge lies in designing the right system architecture, one that intelligently distributes workloads between edge and cloud. This requires expertise across embedded software, hardware design, and scalable backend systems.

At Conclusive Engineering, we specialize in building robust embedded solutions that leverage both edge and cloud capabilities, from firmware development to full-stack system integration. If you're designing a connected product, understanding and applying these paradigms effectively can be the difference between a prototype and a production-ready system.