Ethernet switches are fundamental building blocks of modern networks, from industrial automation systems to IoT deployments and embedded platforms. A common question among engineers and technical decision-makers is: does an Ethernet switch reduce network speed?

The short answer is: not inherently. But it depends on architecture, configuration, and workload.

In embedded systems and product development, network performance directly affects latency, determinism, and data throughput. Whether you're designing an industrial gateway, automotive ECU network, or edge computing device, understanding how switches impact performance is critical. Misconceptions around switching often lead to suboptimal designs, unnecessary hardware costs, or bottlenecks in production systems.

This article explains how Ethernet switches work, when they can affect speed, and how to design networks that maintain optimal performance.

At Conclusive Engineering, we specialize in electronic hardware.

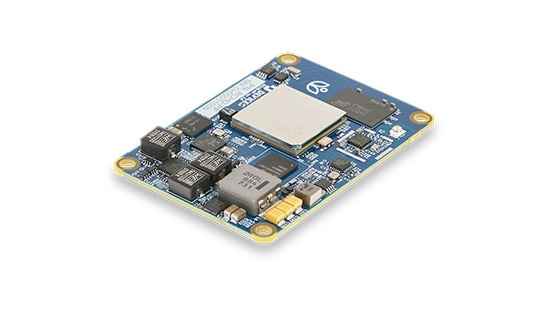

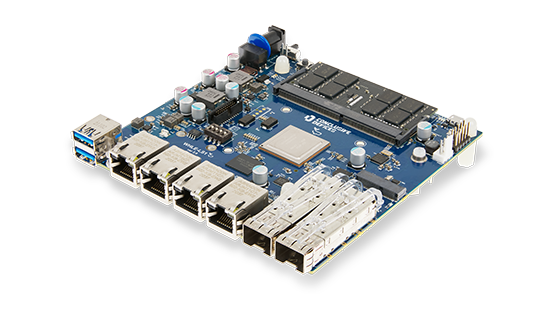

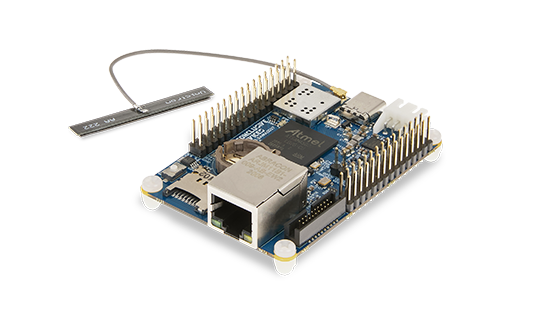

Technical Explanation: How Ethernet Switches Affect Speed

How an Ethernet Switch Works

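An Ethernet switch operates at Layer 2 (Data Link Layer) and forwards frames based on the destination MAC addresses it has learned. Unlike hubs, which repeat every frame out of all ports, switches send each frame only to the port where the destination device is connected, creating dedicated communication paths between devices.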
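To make the forwarding behavior concrete, here is a minimal Python sketch of a learning switch. It records which port each source MAC address was seen on and uses that table to choose the egress port, flooding frames whose destination is broadcast or not yet known. The `LearningSwitch` class and its method names are hypothetical and for illustration only; real switch chips do this in hardware and also age out stale table entries, which is omitted here.

```python
class LearningSwitch:
    """Toy model of Layer 2 forwarding (hypothetical class, illustration only)."""

    BROADCAST = "ff:ff:ff:ff:ff:ff"

    def __init__(self, num_ports: int) -> None:
        self.num_ports = num_ports
        # MAC address -> port it was last seen on (no aging in this sketch).
        self.mac_table: dict[str, int] = {}

    def receive(self, in_port: int, src_mac: str, dst_mac: str) -> list[int]:
        """Return the egress ports for a frame that arrived on in_port."""
        # Learn (or refresh) the port behind the sender's MAC address.
        self.mac_table[src_mac] = in_port
        out_port = self.mac_table.get(dst_mac)
        if dst_mac == self.BROADCAST or out_port is None:
            # Broadcast or unknown unicast: flood to every port except the ingress port.
            return [p for p in range(self.num_ports) if p != in_port]
        if out_port == in_port:
            # Destination sits on the same segment as the sender: filter the frame.
            return []
        return [out_port]


sw = LearningSwitch(num_ports=4)
print(sw.receive(0, "02:00:00:00:00:0a", "02:00:00:00:00:0b"))  # unknown destination, flooded
print(sw.receive(1, "02:00:00:00:00:0b", "02:00:00:00:00:0a"))  # learned, sent to port 0 only
```

Once both hosts have sent a frame, traffic between them touches only their two ports, which is why other ports keep their full bandwidth.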

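Modern switches use one of two forwarding modes and run their links in full duplex:

- Store-and-forward switching (receives the complete frame and verifies its frame check sequence before forwarding)

- Cut-through switching (starts forwarding as soon as the destination MAC address has been read, minimizing delay)

- Full-duplex communication (each port sends and receives simultaneously, so collisions cannot occur)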

In ideal conditions, switches do not reduce speed. Instead, they increase network efficiency by eliminating collisions and segmenting traffic.

When an Ethernet Switch Does NOT Reduce Speed

A properly designed switch:

- Supports line-rate forwarding (e.g., 1 Gbps per port)

- Has sufficient backplane bandwidth

- Uses non-blocking architecture

Example: An 8-port Gigabit switch with 16 Gbps of switching capacity (8 ports × 1 Gbps × 2 directions, since each port is full duplex) can forward full line rate on all ports simultaneously without slowdown.

In such cases:

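- No packet loss occurs inside the switch (frames can still be dropped if several ports send to one port faster than that port can transmit)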

- Latency remains minimal (microseconds)

- Throughput matches link speed
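The 16 Gbps figure in the example counts both directions of every full-duplex port. The sketch below generalizes that check to any port mix. It assumes the datasheet quotes switching capacity as a bidirectional total, which is the common convention but worth confirming per vendor; the function names are hypothetical.

```python
def required_fabric_gbps(port_speeds_gbps: list[float], full_duplex: bool = True) -> float:
    """Switching capacity needed to forward every port at line rate at once."""
    total = sum(port_speeds_gbps)
    # In full duplex each port can receive and transmit at line rate simultaneously,
    # so the capacity figure counts both directions.
    return 2 * total if full_duplex else total


def is_non_blocking(port_speeds_gbps: list[float], switching_capacity_gbps: float,
                    full_duplex: bool = True) -> bool:
    """True if the quoted capacity covers all ports at line rate."""
    return switching_capacity_gbps >= required_fabric_gbps(port_speeds_gbps, full_duplex)


print(required_fabric_gbps([1.0] * 8))    # 8 x 1 Gbps ports
print(is_non_blocking([1.0] * 8, 16.0))   # the 16 Gbps switch from the example
```

For a mixed design, for instance 24 × 1 Gbps access ports plus 2 × 10 Gbps uplinks, the same check asks for 88 Gbps of capacity.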

When a Switch CAN Reduce Speed

While switches are designed for efficiency, several factors can introduce bottlenecks:

1. Oversubscription

If the traffic offered to a port, an uplink, or the switch as a whole exceeds its capacity:

- Packets queue up

- Latency increases

- Throughput drops

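Example: 24 × 1 Gbps ports feeding into a 10 Gbps uplink (up to 24 Gbps offered to 10 Gbps, a 2.4:1 oversubscription ratio) → congestion point when many ports are busy at once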
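The oversubscription in that example is simple arithmetic, sketched below with a hypothetical function name. A ratio above 1:1 only causes congestion when the access ports are actually busy at the same time; the `excess` value is the worst case, where every access port transmits at line rate toward the uplink and the difference must be queued or dropped.

```python
def oversubscription(access_ports: int, access_gbps: float,
                     uplink_gbps: float) -> tuple[float, float]:
    """Return (oversubscription ratio, worst-case excess traffic in Gbps)."""
    offered = access_ports * access_gbps
    ratio = offered / uplink_gbps
    # Traffic that cannot fit on the uplink and must be buffered or dropped.
    excess = max(0.0, offered - uplink_gbps)
    return ratio, excess


ratio, excess = oversubscription(24, 1.0, 10.0)
print(f"{ratio:.1f}:1 oversubscribed, {excess:.0f} Gbps worst-case excess")
```

Here the 24-port example gives a 2.4:1 ratio with 14 Gbps of worst-case excess; adding uplink capacity lowers both numbers.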

2. Limited Backplane Capacity

The switching fabric (backplane) determines how much data can move internally.

- Low-end switches may not support full line-rate traffic on all ports at once

- Embedded switches often trade cost for performance

3. Buffer Size Limitations

Small buffers can cause:

- Packet drops during bursts

- TCP retransmissions

- Reduced effective throughput

Critical in:

- Industrial automation

- Real-time data acquisition

- Video streaming systems
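The buffer needed to ride out a burst can be estimated with a simple fluid model: the egress queue grows at the difference between the combined ingress rate and the egress rate for as long as the burst lasts. The sketch below assumes constant rates, a single output queue, and no flow control. The 32 KiB buffer in the example is a hypothetical figure, not a property of any particular switch chip.

```python
def burst_buffer_bytes(ingress_gbps: list[float], egress_gbps: float, burst_us: float) -> float:
    """Queue depth in bytes needed to absorb a many-to-one burst without drops."""
    excess_gbps = max(0.0, sum(ingress_gbps) - egress_gbps)
    # 1 Gbps = 1000 bits per microsecond; divide by 8 to convert bits to bytes.
    return excess_gbps * 1000 * burst_us / 8


def dropped_bytes(needed_bytes: float, buffer_bytes: float) -> float:
    """Bytes that overflow a buffer of the given size during the burst."""
    return max(0.0, needed_bytes - buffer_bytes)


# Seven 1 Gbps ports bursting into one 1 Gbps port for 100 microseconds.
needed = burst_buffer_bytes([1.0] * 7, 1.0, 100)
print(needed)                             # 75000.0
print(dropped_bytes(needed, 32 * 1024))   # hypothetical 32 KiB buffer: 42232.0
```

In a TCP flow, each of those dropped frames typically triggers a retransmission, which is how a short burst turns into reduced effective throughput.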

4. Latency Sensitivity

Switches introduce processing delay:

- Store-and-forward: higher latency

- Cut-through: lower latency but less error checking

In most systems: negligible

In real-time systems (e.g., TSN): critical
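Much of the latency gap between the two modes comes from how much of the frame must arrive before forwarding can begin: store-and-forward needs the whole frame to check its FCS, while cut-through can start after the header. The sketch below computes only that reception wait. It assumes cut-through waits for the 14-byte Ethernet header (real devices vary) and ignores the preamble and the device's internal lookup and fabric latency, which are implementation-specific.

```python
def rx_wait_us(num_bytes: int, link_gbps: float) -> float:
    """Time to receive num_bytes from the wire, in microseconds."""
    return num_bytes * 8 / (link_gbps * 1000)  # 1 Gbps = 1000 bits per microsecond


def store_and_forward_wait_us(frame_bytes: int, link_gbps: float) -> float:
    # The whole frame, including its 4-byte FCS, must arrive before the CRC check.
    return rx_wait_us(frame_bytes, link_gbps)


def cut_through_wait_us(link_gbps: float, header_bytes: int = 14) -> float:
    # Assumption: forwarding starts once destination MAC, source MAC and
    # EtherType (14 bytes) have been received.
    return rx_wait_us(header_bytes, link_gbps)


for size in (64, 1518):
    print(size, store_and_forward_wait_us(size, 1.0), cut_through_wait_us(1.0))
```

At 1 Gbps this gives about 0.5 µs for a minimum-size 64-byte frame and about 12.1 µs for a full-size 1518-byte frame with store-and-forward, versus roughly 0.11 µs of header wait for cut-through. The gap shrinks tenfold at 10 Gbps and grows tenfold at 100 Mbps.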

5. Duplex Mismatch or Configuration Errors

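Incorrect settings, such as one end forced to full duplex while the other falls back to half duplex, can cause:

- Late collisions on the half-duplex side and CRC errors on the full-duplex side

- Retransmissions

- Severe throughput degradation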

6. CPU-Based Switching (Embedded Systems)

In some embedded designs:

- Switching is handled partially by the CPU

- Packet forwarding is not hardware-accelerated

Result:

- Performance drops under load

- Increased jitter
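One way to see why CPU-based switching struggles is to count frames. Including preamble and inter-frame gap, a Gigabit link carries roughly 1.49 million minimum-size frames per second, so a software forwarding path gets only a small, fixed cycle budget per frame. The sketch below computes that budget; the 1 GHz single-core CPU is a hypothetical figure, and real software paths also spend cycles on interrupts, DMA descriptor handling, and cache misses.

```python
PREAMBLE_SFD_BYTES = 8     # preamble + start frame delimiter
INTERFRAME_GAP_BYTES = 12  # minimum idle time between frames


def line_rate_pps(frame_bytes: int, link_gbps: float) -> float:
    """Maximum frames per second on one link for a given frame size (FCS included)."""
    wire_bits = (frame_bytes + PREAMBLE_SFD_BYTES + INTERFRAME_GAP_BYTES) * 8
    return link_gbps * 1e9 / wire_bits


def cycles_per_frame(cpu_hz: float, frame_bytes: int, link_gbps: float, ports: int = 1) -> float:
    """Cycles available per frame if one core must forward every frame from `ports` links."""
    return cpu_hz / (line_rate_pps(frame_bytes, link_gbps) * ports)


print(round(line_rate_pps(64, 1.0)))             # 1488095
print(round(cycles_per_frame(1e9, 64, 1.0, 4)))  # 168
```

With four busy Gigabit ports, the hypothetical 1 GHz core has about 168 cycles per minimum-size frame, which is why line-rate forwarding is normally left to dedicated switch hardware.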

Ethernet Switch vs Hub vs Router

Ethernet Switch vs Hub

| Feature | Switch | Hub |
| --- | --- | --- |
| Speed | Full per-port speed | Shared bandwidth |
| Collisions | Eliminated | Frequent |
| Efficiency | High | Low |

Switches improve speed compared to hubs.

Ethernet Switch vs Router

| Feature | Switch | Router |
| --- | --- | --- |
| OSI Layer | Layer 2 | Layer 3 |
| Function | Frame forwarding | Packet routing |
| Speed Impact | Minimal | Higher processing delay |

Best Practices: How to Avoid Speed Reduction

1. Choose Non-Blocking Switches

Look for:

- Switching capacity ≥ 2 × the sum of all port speeds (both directions of each full-duplex port)

- Example: 8 × 1 Gbps → at least 16 Gbps switching capacity

2. Avoid Oversubscription

- Balance uplink capacity with access ports

- Use link aggregation (IEEE 802.1AX / LACP) if a single uplink is not enough
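Link aggregation does not split a single flow across member links. Switches typically hash header fields so that every frame of a flow uses the same member, which preserves frame order but means one flow can never exceed a single member's speed. The sketch below illustrates the idea with CRC-32 over the MAC pair. The hash and the fields are assumptions: real switches use vendor-specific, often configurable, hashes over Layer 2, 3, or 4 fields.

```python
import zlib
from collections import Counter


def lag_member(src_mac: str, dst_mac: str, members: int) -> int:
    """Choose a member link for a flow; the same MAC pair always maps to the same link."""
    key = f"{src_mac}->{dst_mac}".encode()
    return zlib.crc32(key) % members


# 64 hypothetical hosts talking to one server over a 2-link aggregate.
server = "02:00:00:00:00:ff"
load = Counter(lag_member(f"02:00:00:00:00:{i:02x}", server, 2) for i in range(64))
print(load)  # the split depends on the hash and is not guaranteed to be even
```

Aggregation therefore helps most when many flows share the uplink; a single large transfer between two hosts still sees one link's worth of bandwidth.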

3. Use Hardware-Accelerated Switching

Especially for embedded systems:

- Avoid CPU-based packet handling

- Select switches with dedicated ASICs

4. Optimize Network Topology

- Minimize hop count

- Avoid unnecessary daisy-chaining
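Hop count matters most with store-and-forward switches, because each one receives the full frame again before sending it on. The rough one-way model below assumes identical links, a hypothetical fixed per-switch processing latency, no queuing, and negligible cable delay; the function name is illustrative.

```python
def chain_latency_us(hops: int, frame_bytes: int, link_gbps: float,
                     switch_latency_us: float, store_and_forward: bool = True) -> float:
    """Approximate one-way latency through `hops` switches in series (no queuing)."""
    serialization_us = frame_bytes * 8 / (link_gbps * 1000)  # 1 Gbps = 1000 bits per us
    # The sending host serializes the frame once; each store-and-forward switch
    # must receive it completely again before transmitting. Cut-through is
    # approximated as adding only the fixed per-switch latency.
    per_hop = switch_latency_us + (serialization_us if store_and_forward else 0.0)
    return serialization_us + hops * per_hop


# Full-size frames at 1 Gbps, assuming 2 us of internal latency per switch.
star = chain_latency_us(1, 1518, 1.0, 2.0)
daisy_chain = chain_latency_us(5, 1518, 1.0, 2.0)
print(f"star: {star:.1f} us, 5-switch daisy chain: {daisy_chain:.1f} us")
```

Under these assumptions, a device behind five daisy-chained switches sees roughly three times the latency of one connected through a single switch (about 83 µs versus 26 µs).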

5. Enable QoS (Quality of Service)

Prioritize:

- Real-time traffic

- Control signals

- Critical data streams
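A common QoS mechanism on an egress port is strict-priority queuing: frames are sorted into queues by priority, and the port always transmits from the highest-priority non-empty queue. The sketch below is a simplified model; the 0-7 scale with 7 highest and the class name are assumptions for illustration, since real switches map PCP or DSCP values to queues through configurable tables.

```python
from collections import deque
from typing import Any, Optional


class StrictPriorityPort:
    """Egress port with strict-priority queues (hypothetical model, 7 = highest)."""

    def __init__(self, num_queues: int = 8) -> None:
        self.queues: list[deque] = [deque() for _ in range(num_queues)]

    def enqueue(self, frame: Any, priority: int) -> None:
        self.queues[priority].append(frame)

    def dequeue(self) -> Optional[Any]:
        """Transmit from the highest-priority non-empty queue; None if the port is idle."""
        for queue in reversed(self.queues):
            if queue:
                return queue.popleft()
        return None


port = StrictPriorityPort()
port.enqueue("bulk file transfer", 0)
port.enqueue("control message", 7)
port.enqueue("sensor stream", 5)
print(port.dequeue())  # the control message leaves first
```

Strict priority can starve low-priority queues under sustained load, which is why many switches also offer weighted scheduling; the right choice depends on how much critical traffic the network carries.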

6. Monitor and Test

Use:

- Packet analyzers

- Throughput testing tools

- Latency measurements

Common Mistakes

- Using cheap switches with insufficient backplane capacity.

- Ignoring uplink bottlenecks.

- Assuming all Gigabit switches perform equally.

- Overloading embedded switch chips.

- Not considering latency in real-time systems.

- Mixing half-duplex and full-duplex devices.

FAQ

Does adding more switches slow down the network?

Not significantly, unless:

- There are too many hops

- The switches are low-quality

- Latency-sensitive applications are involved

Does a Gigabit switch guarantee 1 Gbps speed?

No. It depends on:

- Backplane capacity

- Network congestion

- Device performance
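Even through a perfect switch, protocol overhead keeps application throughput below the link rate. The sketch below computes best-case TCP payload throughput over standard Ethernet, assuming a 1500-byte MTU, IPv4, no loss, and full-size segments; it is an upper bound, not a measurement, and the function name is illustrative.

```python
ETH_HEADER = 14      # destination MAC, source MAC, EtherType
FCS = 4              # frame check sequence
PREAMBLE_SFD = 8     # preamble + start frame delimiter
INTERFRAME_GAP = 12  # minimum idle time between frames


def tcp_goodput_mbps(link_mbps: float = 1000.0, mtu: int = 1500,
                     ip_tcp_header_bytes: int = 52) -> float:
    """Upper bound on TCP payload throughput for full-size segments.

    The default of 52 header bytes is 20 B IPv4 + 20 B TCP + 12 B timestamp option.
    """
    payload = mtu - ip_tcp_header_bytes
    on_wire = mtu + ETH_HEADER + FCS + PREAMBLE_SFD + INTERFRAME_GAP
    return link_mbps * payload / on_wire


print(round(tcp_goodput_mbps(), 1))                        # 941.5
print(round(tcp_goodput_mbps(ip_tcp_header_bytes=40), 1))  # 949.3 (no timestamps)
```

A throughput test that reports around 940 Mbps on a Gigabit path is therefore close to ideal; noticeably lower numbers point to congestion, buffering, or device limits.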

Do managed and unmanaged switches differ in speed?

Speed is similar, but managed switches offer:

- VLANs

- QoS

- Traffic control

These can improve effective performance.

Do Ethernet switches add latency?

Yes, but typically:

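- Roughly 1-10 microseconds per switch, plus, for store-and-forward switches, the time to receive the whole frame (about 12 µs for a 1518-byte frame at 1 Gbps)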

- Negligible for most applications

Can a switch become a bottleneck?

Yes, if:

- Oversubscribed

- Underpowered

- Poorly configured

Conclusion

So, does an Ethernet switch reduce speed?

No. When properly designed and selected, it enhances network performance rather than limiting it. However, poor configuration, low-quality hardware, or mismatched system requirements can introduce bottlenecks.

For engineers working on embedded systems, IoT platforms, or industrial devices, the key is not just choosing a switch, but understanding:

- Bandwidth requirements

- Latency constraints

- Traffic patterns

At Conclusive Engineering, we design hardware and firmware systems that integrate networking components efficiently, ensuring performance, reliability, and scalability across demanding applications.